delfi

delfi: A Python toolbox to perform simulation-based inference using density-estimation approaches.

The focus of delfi is Sequential Neural Posterior Estimation (SNPE). In SNPE, a neural network is trained to perform Bayesian inference on simulated data.

- To see illustrations of SNPE on canonical problems in neuroscience, read our preprint: Training deep neural density estimators to identify mechanistic models of neural dynamics.

- To get started quickly, refer to the installation instructions and tutorials. More in-depth tutorials will be added soon.

- To learn more about the general motivation behind simulation-based inference, and the algorithms included in delfi, keep on reading.

Motivation and approach

Many areas of science and engineering make extensive use of complex, stochastic, numerical simulations to describe the structure and dynamics of the processes being investigated. A key challenge in simulation-based science is linking simulation models to empirical data: Bayesian inference provides a general and powerful framework for identifying the set of parameters which are consistent both with empirical data and prior knowledge.

One of the key quantities required for statistical inference, the likelihood of observed data given parameters, \(\mathcal{L}(\theta) = p(x_o|\theta)\), is typically intractable for simulation-based models, rendering conventional statistical approaches inapplicable.
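To make the difficulty concrete, Bayes' rule expresses the posterior in terms of the likelihood and the prior \(p(\theta)\). For a stochastic simulator, the likelihood is only defined implicitly, by marginalizing over all of the simulator's internal random variables. These are written here as \(z\), a symbol introduced for this illustration only:

\[
p(\theta|x_o) = \frac{p(x_o|\theta)\, p(\theta)}{\int p(x_o|\theta')\, p(\theta')\, d\theta'},
\qquad
p(x_o|\theta) = \int p(x_o, z|\theta)\, dz
\]

The integral over \(z\) typically has no closed form and is too high-dimensional to evaluate numerically, so \(p(x_o|\theta)\) cannot be computed even though drawing samples \(x \sim p(x|\theta)\) only requires running the simulator.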

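Sequential Neural Posterior Estimation (SNPE) is a machine-learning technique that addresses this problem: rather than evaluating the likelihood, it trains a neural network on simulated data to approximate the posterior directly.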

Goal: Algorithmically identify mechanistic models which are consistent with data.

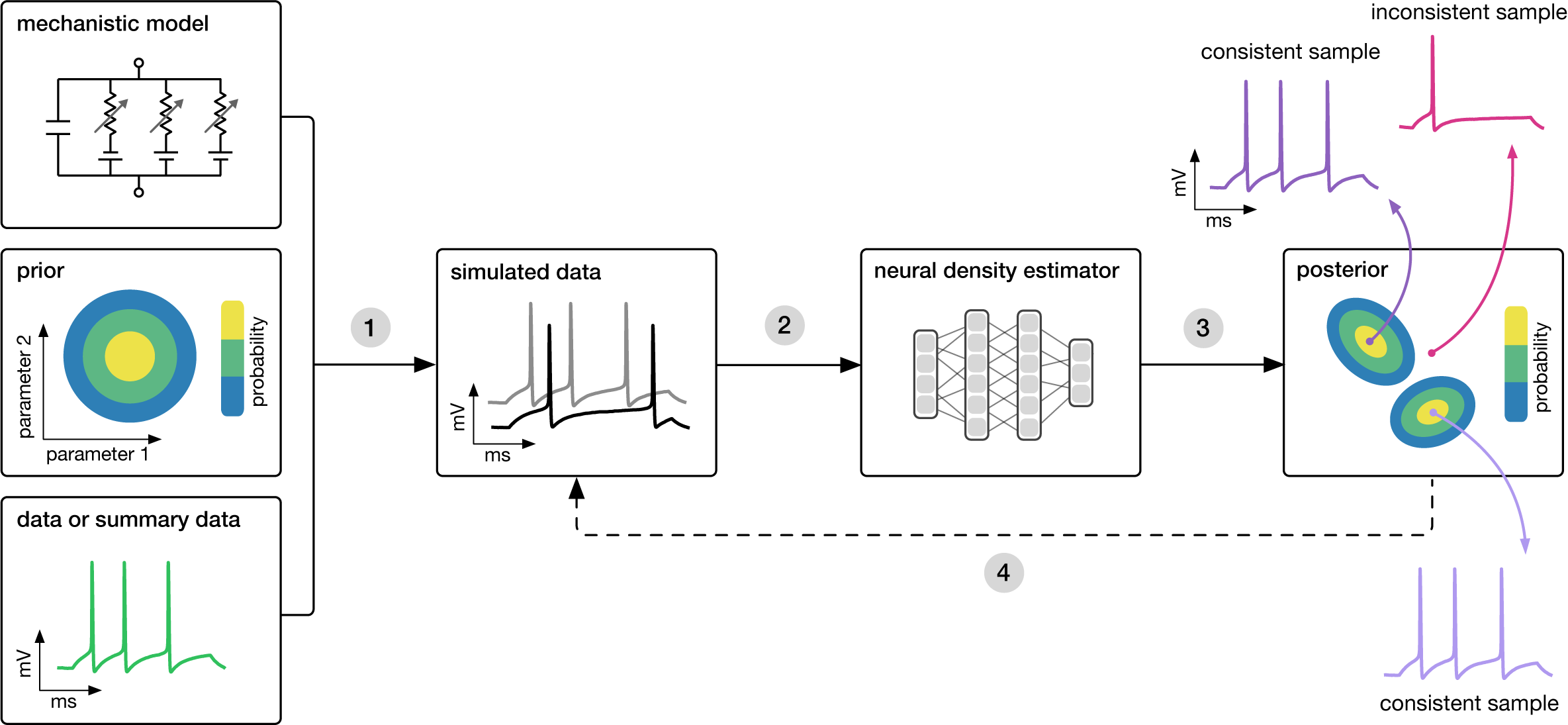

SNPE takes three inputs: a candidate mechanistic model, prior knowledge or constraints on model parameters, and data (or summary statistics). SNPE proceeds in the following steps:

- Sample parameters from the prior and simulate synthetic datasets from these parameters.

- Train a deep neural density estimator to learn the (probabilistic) association between data (or data features) and the underlying parameters, i.e. to learn statistical inference from simulated data.

- Apply the trained density estimation network to empirical data to derive the full space of parameters consistent with the data and the prior, i.e. the posterior distribution. High posterior probability is assigned to parameters that are consistent with both the data and the prior, and low probability to inconsistent parameters.

- If needed, use an initial estimate of the posterior to adaptively generate additional informative simulations.
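The first three steps can be sketched on a toy problem. The example below does not use delfi's API and is not a delfi implementation: the one-parameter Gaussian simulator, the names `sample_prior`, `simulate`, and `fit_conditional_gaussian`, and the constants are all assumptions made for illustration. A linear-Gaussian conditional density fitted by least squares stands in for the deep density estimation network, which is enough here because the true posterior of this toy model is itself Gaussian.

```python
import numpy as np

rng = np.random.default_rng(0)

PRIOR_STD = 1.0  # assumed prior: theta ~ N(0, PRIOR_STD^2)
NOISE_STD = 0.5  # assumed simulator noise level


def sample_prior(n):
    """Step 1a: draw parameters from the prior."""
    return rng.normal(0.0, PRIOR_STD, size=n)


def simulate(theta):
    """Step 1b: toy stochastic simulator, x = theta + noise."""
    return theta + rng.normal(0.0, NOISE_STD, size=theta.shape)


def fit_conditional_gaussian(x, theta):
    """Step 2: fit q(theta | x) = N(a*x + b, s2) by maximum likelihood.

    This linear-Gaussian model is a stand-in for a neural density estimator.
    """
    design = np.column_stack([x, np.ones_like(x)])
    (a, b), *_ = np.linalg.lstsq(design, theta, rcond=None)
    s2 = np.mean((theta - (a * x + b)) ** 2)
    return a, b, s2


theta = sample_prior(20_000)
x = simulate(theta)
a, b, s2 = fit_conditional_gaussian(x, theta)

# Step 3: evaluate the learned conditional density at the observed data.
x_o = 1.5
post_mean = a * x_o + b
post_var = s2
print(f"approximate posterior: N({post_mean:.3f}, {post_var:.3f})")
```

For this toy model the exact posterior is available in closed form, with mean \(0.8\,x_o = 1.2\) and variance \(0.2\), so the fitted values can be checked against it. The adaptive fourth step would draw the next batch of parameters from this estimate rather than from the prior, which then requires a correction for the changed sampling distribution.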

Publications

Algorithms included in delfi were published in the following papers, which provide additional information:

- Fast ε-free Inference of Simulation Models with Bayesian Conditional Density Estimation, by Papamakarios & Murray (NeurIPS 2016) [PDF] [BibTeX]

- Flexible statistical inference for mechanistic models of neural dynamics, by Lueckmann, Goncalves, Bassetto, Öcal, Nonnenmacher & Macke (NeurIPS 2017) [PDF] [BibTeX]

- Automatic posterior transformation for likelihood-free inference, by Greenberg, Nonnenmacher & Macke (ICML 2019) [PDF] [BibTeX]

We refer to these algorithms as SNPE-A, SNPE-B, and SNPE-C/APT, respectively.

As an alternative to directly estimating the posterior on parameters given data, it is also possible to estimate the likelihood of data given parameters, and then draw posterior samples using MCMC (Papamakarios, Sterratt & Murray, 2019 [1]; Lueckmann, Karaletsos, Bassetto & Macke, 2019). Depending on the problem, approximating the likelihood can be more or less effective than SNPE techniques.
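The likelihood-based alternative can be sketched on a toy problem as well. This is not the code from the cited papers and not part of delfi: the Gaussian simulator, the linear-Gaussian surrogate likelihood (standing in for a neural density estimator), and the names `log_unnormalized_posterior` and `metropolis` are assumptions made for illustration. The surrogate \(q(x|\theta)\) is fitted to simulations, and a random-walk Metropolis sampler then draws from the density proportional to \(q(x_o|\theta)\,p(\theta)\).

```python
import numpy as np

rng = np.random.default_rng(1)
PRIOR_STD, NOISE_STD = 1.0, 0.5  # toy-model assumptions

# 1) Simulate (theta, x) pairs from the prior and the toy simulator.
theta = rng.normal(0.0, PRIOR_STD, size=20_000)
x = theta + rng.normal(0.0, NOISE_STD, size=theta.shape)

# 2) Fit a surrogate likelihood q(x | theta) = N(c*theta + d, v).
design = np.column_stack([theta, np.ones_like(theta)])
(c, d), *_ = np.linalg.lstsq(design, x, rcond=None)
v = np.mean((x - (c * theta + d)) ** 2)


def log_unnormalized_posterior(t, x_o):
    """Surrogate log-likelihood plus log-prior, up to additive constants."""
    log_lik = -0.5 * (x_o - (c * t + d)) ** 2 / v
    log_prior = -0.5 * t ** 2 / PRIOR_STD ** 2
    return log_lik + log_prior


def metropolis(x_o, n_steps=20_000, step=1.0):
    """3) Random-walk Metropolis sampling of theta given x_o."""
    samples = np.empty(n_steps)
    current = 0.0
    current_lp = log_unnormalized_posterior(current, x_o)
    for i in range(n_steps):
        proposal = current + step * rng.normal()
        proposal_lp = log_unnormalized_posterior(proposal, x_o)
        # Accept with probability min(1, exp(proposal_lp - current_lp)).
        if np.log(rng.uniform()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        samples[i] = current
    return samples


samples = metropolis(x_o=1.5)[2_000:]  # discard burn-in
print(f"posterior mean ~ {samples.mean():.2f}, variance ~ {samples.var():.2f}")
```

Unlike direct posterior estimation, this route needs a sampling step for every new observation, but the fitted likelihood does not depend on which distribution the training parameters were drawn from.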

See Cranmer, Brehmer & Louppe (2019) for a recent review of simulation-based inference, and our recent preprint, Training deep neural density estimators to identify mechanistic models of neural dynamics (Goncalves et al., 2019), for applications to canonical problems in neuroscience.

[1] Code for Sequential Neural Likelihood (SNL) is available from the original repository or as a Python 3 package.